A Protocol for Agent-Driven Interfaces

A2UI enables AI agents to generate rich, interactive user interfaces that render natively across web, mobile, and desktop—without executing arbitrary code.

Specification Versions

| Version | Status | Description |
|---|---|---|
| v1.0 | Candidate | Release candidate. Adds client-to-server RPC (actionResponse) and action IDs, and renames theme to surfaceProperties. Designated v0.10 while in draft. Evolution guide → |
| v0.9.1 | Current | Current production release. Minor refinements to v0.9: standardizes on the application/a2ui+json MIME type and relaxes surfaceId constraints. Evolution guide → |
| v0.9 | Stable | Previous stable version. Philosophical shift to Prompt-First. Introduces createSurface, client-side functions, custom catalogs, modular schemas, and a standard format for validation-failed errors. Evolution guide → |
| v0.8 | Legacy | Legacy version, designed Structured Output first. Baseline surfaces, components, data binding, and the adjacency list model. |

A2UI is Apache 2.0 licensed, created by Google with contributions from CopilotKit and the open source community, and is in active development on GitHub.

A2UI solves the following problem: how can AI agents safely send rich UIs across trust boundaries?

Instead of text-only responses or risky code execution, A2UI lets agents send declarative component descriptions that clients render using their own native widgets. It's like having agents speak a universal UI language.

This repository contains:

- A2UI specifications (v0.9.1 current, v1.0 candidate).

- Client-side renderer implementations (Angular, Flutter, Lit, Markdown, etc.).

- Transports, such as A2A, that carry A2UI messages between agents and clients.

- Secure by Design: A declarative data format, not executable code. Agents can use only pre-approved components from your catalog, so they cannot inject arbitrary UI.

- LLM-Friendly: A flat, streaming JSON structure designed for easy generation. LLMs can build UIs incrementally instead of having to emit a complete, valid JSON document in one shot.

- Framework-Agnostic: One agent response works on every client. Render the same UI in Angular, Flutter, React, or native mobile with your own styled components.

- Progressive Rendering: Stream UI updates as they are generated. Users see the interface build in real time instead of waiting for the complete response.
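The properties above can be sketched as a tiny client renderer. This is a hypothetical Python illustration, not code from any official A2UI renderer: the field names (`id`, `component`, `children`, `text`, `label`), the `CATALOG` dict, the `render` function and the string "widgets" are simplifying assumptions, not the normative schema. Components reference their children by ID (the adjacency list model), so the client can render whatever has arrived, show placeholders for the rest, and reject any type that is not in its own catalog.

```python
# Hypothetical, simplified sketch of a client rendering a flat, streamed
# component list. Field names are illustrative, not the normative A2UI schema.
from typing import Callable

# The client's catalog: the only component types an agent may use. Each entry
# builds a "native widget" (a string here, standing in for a real UI object).
Catalog = dict[str, Callable[[dict, list[str]], str]]

CATALOG: Catalog = {
    "Column": lambda props, kids: "Column[" + ", ".join(kids) + "]",
    "Text": lambda props, kids: f"Text({props.get('text', '')!r})",
    "Button": lambda props, kids: f"Button({props.get('label', '')!r})",
}


def render(components: dict[str, dict], node_id: str, catalog: Catalog) -> str:
    """Render the subtree rooted at node_id from a flat id -> component map."""
    node = components.get(node_id)
    if node is None:
        # Referenced but not streamed yet: a placeholder keeps rendering progressive.
        return "<pending>"
    kind = node["component"]
    if kind not in catalog:
        # Types outside the catalog are rejected; nothing is ever executed.
        raise ValueError(f"component type {kind!r} is not in the client catalog")
    kids = [render(components, child, catalog) for child in node.get("children", [])]
    return catalog[kind](node, kids)


# Components arrive in chunks as the agent streams them.
chunks = [
    [{"id": "root", "component": "Column", "children": ["title", "ok"]}],
    [{"id": "title", "component": "Text", "text": "Pick a restaurant"}],
    [{"id": "ok", "component": "Button", "label": "Book"}],
]

components: dict[str, dict] = {}
for chunk in chunks:
    for comp in chunk:
        components[comp["id"]] = comp  # a later component with the same id replaces it
    print(render(components, "root", CATALOG))
```

In this sketch the three prints show the UI filling in: `Column[<pending>, <pending>]`, then the title, then `Column[Text('Pick a restaurant'), Button('Book')]`. Because the client only looks types up in its own catalog, a payload naming an unknown type raises an error instead of running anything.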

Get Started in 5 Minutes

- Quickstart Restaurant Finder Demo: Run the full-stack demo locally with a Gemini-powered ADK agent and a Lit renderer. Learn A2UI end to end, then customize it for your use case.

- Scaffold a Next.js app wired to any agent framework via AG-UI. The result is a React + A2UI app, ready to ship.

- Generate A2UI JSON from a visual editor, with no install required. Paste the output into any agent prompt.

- Step through pre-built A2UI streaming scenarios across the Lit, React, and Angular renderers to see the protocol in motion before writing code.

- Understand surfaces, components, data binding, and the adjacency list model.

- Integrate A2UI renderers into your app, or build agents that generate UIs.

- Protocol Specifications: Dive into the complete technical specs for v0.8 (legacy) · v0.9 (stable) · v0.9.1 (current) · v1.0 (candidate).

How It Works

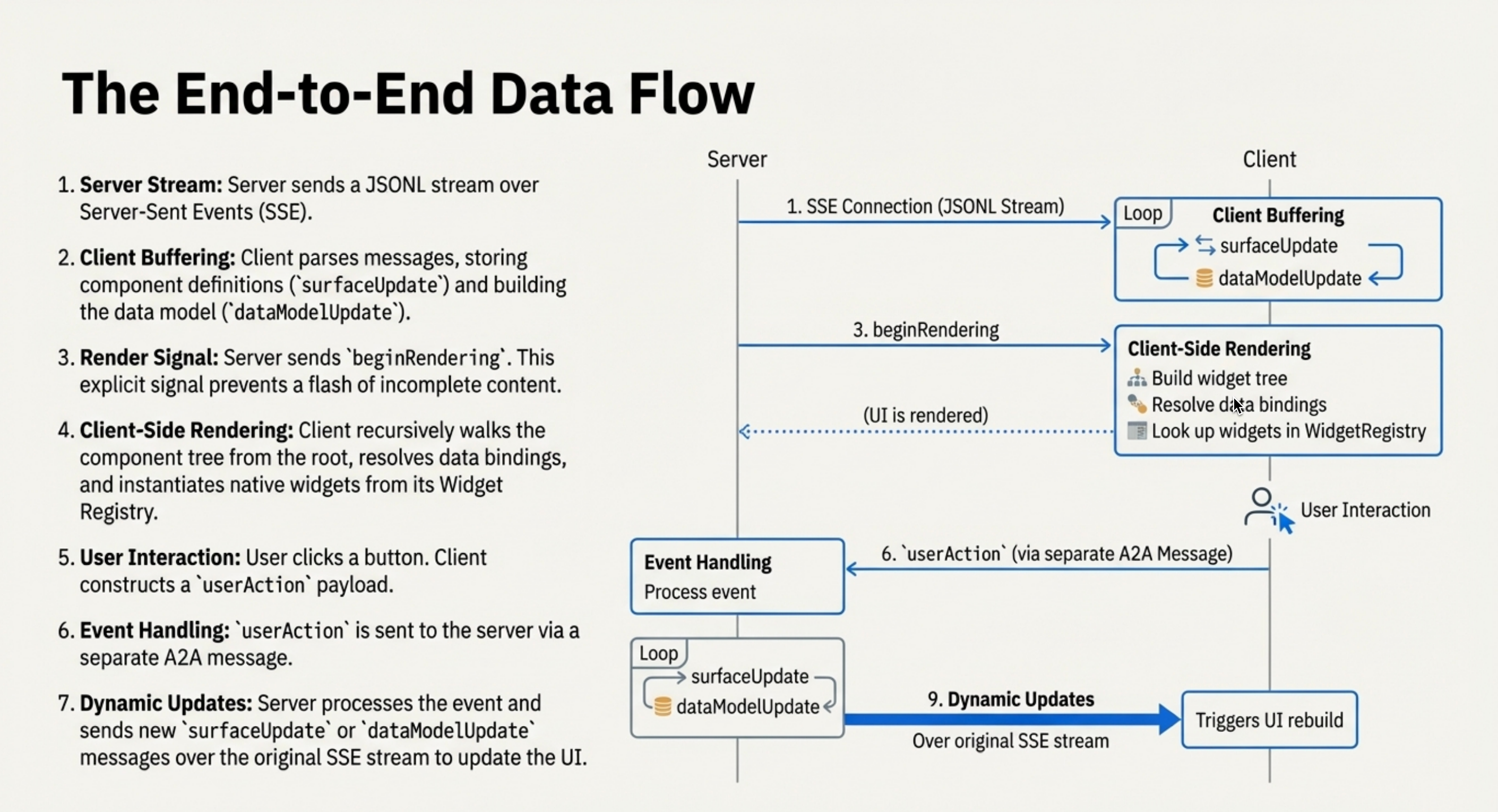

The typical interaction flow consists of these steps:

- User sends a message to an AI agent

- Agent generates A2UI messages describing the UI (structure + data)

- Messages stream to the client application

- Client renders using native components (Angular, Flutter, React, etc.)

- User interacts with the UI, sending actions back to the agent

- Agent responds with updated A2UI messages
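The interaction flow above can be modeled as a small client-side loop. This is a hypothetical, simplified Python sketch, not an official SDK. `createSurface` comes from the version table; the `updateComponents` and `updateDataModel` message names, all payload fields, the flat path-to-value data model, and the `Client` class with its `apply`, `text_of`, and `click` methods are assumptions made for illustration.

```python
# Hypothetical sketch of the agent <-> client loop. Message and field names
# are illustrative assumptions, not the normative A2UI schema.
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Surface:
    """One UI surface: its components by id plus its data model."""
    components: dict[str, dict] = field(default_factory=dict)
    # Simplification: a flat path -> value map instead of a nested JSON document.
    data: dict[str, Any] = field(default_factory=dict)


class Client:
    def __init__(self) -> None:
        self.surfaces: dict[str, Surface] = {}
        self.outbox: list[dict] = []  # user actions queued for the agent

    def apply(self, msg: dict) -> None:
        """Apply one agent -> client message (steps 2-3 and 6)."""
        if "createSurface" in msg:
            self.surfaces[msg["createSurface"]["surfaceId"]] = Surface()
        elif "updateComponents" in msg:
            body = msg["updateComponents"]
            surface = self.surfaces[body["surfaceId"]]
            for comp in body["components"]:
                surface.components[comp["id"]] = comp
        elif "updateDataModel" in msg:
            body = msg["updateDataModel"]
            self.surfaces[body["surfaceId"]].data[body["path"]] = body["value"]
        else:
            raise ValueError(f"unknown message: {list(msg)}")

    def text_of(self, surface_id: str, comp_id: str) -> str:
        """Resolve a Text component's value, following a data binding if present (step 4)."""
        surface = self.surfaces[surface_id]
        text = surface.components[comp_id]["text"]
        if isinstance(text, dict) and "path" in text:
            return str(surface.data.get(text["path"], ""))
        return str(text)

    def click(self, surface_id: str, comp_id: str) -> None:
        """The user taps a button: queue its declared action for the agent (step 5)."""
        comp = self.surfaces[surface_id].components[comp_id]
        self.outbox.append(
            {"action": comp["action"], "surfaceId": surface_id, "componentId": comp_id}
        )


client = Client()
stream = [
    {"createSurface": {"surfaceId": "main"}},
    {"updateComponents": {"surfaceId": "main", "components": [
        {"id": "root", "component": "Column", "children": ["greeting", "book"]},
        {"id": "greeting", "component": "Text", "text": {"path": "/user/name"}},
        {"id": "book", "component": "Button", "label": "Book", "action": "book_table"},
    ]}},
    {"updateDataModel": {"surfaceId": "main", "path": "/user/name", "value": "Ada"}},
]
for msg in stream:
    client.apply(msg)

print(client.text_of("main", "greeting"))  # Ada
client.click("main", "book")
print(client.outbox)
```

In this sketch the data model is kept separate from the component tree, so the agent can change a bound value with one small data update instead of resending the components that display it.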

A2UI in Action

Landscape Architect Demo

Watch an agent generate all of the interfaces for a landscape architect application. The user uploads a photo; the agent uses Gemini to understand it and generate a custom form for landscaping needs.

Custom Components: Interactive Charts & Maps

Watch an agent choose to respond with a chart component to answer a numerical summary question, and then choose a Google Map component to answer a location question. Both are custom components offered by the client.
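Offering custom components amounts to adding entries to the client's catalog and letting the agent know which types it may use. The Python sketch below is hypothetical: the `Chart` and `Map` type names, their props, and the `describe` and `render` helpers are assumptions, the strings stand in for widgets from a real charting library or map SDK, and how a real client advertises its catalog to an agent is defined by the specification, not by this sketch.

```python
# Hypothetical sketch: extending a client catalog with custom components.
# Type names ("Chart", "Map") and their props are illustrative assumptions.
from typing import Callable

Renderer = Callable[[dict], str]

# Basic components this sketch's client always supports.
BASIC: dict[str, Renderer] = {
    "Text": lambda props: f"Text({props['text']!r})",
}

# Client-owned custom components; the strings stand in for real widgets.
CUSTOM: dict[str, Renderer] = {
    "Chart": lambda props: f"BarChart({len(props['values'])} bars)",
    "Map": lambda props: f"Map(lat={props['lat']}, lng={props['lng']})",
}

CATALOG: dict[str, Renderer] = {**BASIC, **CUSTOM}


def describe(catalog: dict[str, Renderer]) -> str:
    """A summary that could be placed in the agent's prompt."""
    return "Available components: " + ", ".join(sorted(catalog))


def render(component: dict, catalog: dict[str, Renderer] = CATALOG) -> str:
    """Render one component with the client's own widget for its type."""
    kind = component["component"]
    if kind not in catalog:
        raise ValueError(f"component type {kind!r} is not in the client catalog")
    return catalog[kind](component)


print(describe(CATALOG))  # Available components: Chart, Map, Text
# The agent picks a chart for a numerical question...
print(render({"id": "c1", "component": "Chart", "values": [3, 5, 2]}))  # BarChart(3 bars)
# ...and a map for a location question.
print(render({"id": "m1", "component": "Map", "lat": 37.42, "lng": -122.08}))
```

The agent only ever names a type; the client decides which native widget backs it, which is what lets each client style or swap its chart and map implementations independently.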

A2UI Composer

CopilotKit has a public A2UI Widget Builder to try out as well.